The minimum wage in the US in 1972 was $1.60 an hour. Average rent that year was about $120 a month, so a worker could cover rent with 75 hours of work and, over a full-time month of 160 hours, have roughly $136 left over (before taxes) to pay for food and other necessities. Adjusted for inflation, that rent should be $540 today. On average it is at least twice that much in most states: $1100. At the current federal minimum wage of $7.25, a worker has to put in 152 hours a month (before taxes) just to make rent, which leaves about $60 from a full-time month to pay for food and life necessities. You couldn’t feed one person for that even if they ate only Top Ramen for every meal. And you wouldn’t be able to cook it, because you could not afford the utility bills: the average cost of utilities (which used to be included in the rent) is more than $200, putting the average minimum wage renter well into the red just for basic survival.
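
For anyone who wants to check the arithmetic, here is a rough sketch in Python. The wage and rent figures come from the paragraph above; the 160-hour month (40 hours a week for 4 weeks) and the $7.25 current federal minimum wage are assumptions made explicit here, and the function name `rent_budget` is made up for illustration.

```python
HOURS_PER_MONTH = 160  # assumption: 40 hours a week for 4 weeks


def rent_budget(hourly_wage, monthly_rent, hours_per_month=HOURS_PER_MONTH):
    """Return (hours needed to cover rent, dollars left after rent), before taxes."""
    hours_for_rent = monthly_rent / hourly_wage
    left_over = hourly_wage * hours_per_month - monthly_rent
    return hours_for_rent, left_over


# 1972: $1.60 an hour, $120 average rent
hours_1972, left_1972 = rent_budget(1.60, 120)

# Today: $7.25 an hour (assumed federal minimum), $1100 average rent
hours_now, left_now = rent_budget(7.25, 1100)

print(f"1972:  {hours_1972:.0f} hours for rent, ${left_1972:.0f} left over")
print(f"Today: {hours_now:.0f} hours for rent, ${left_now:.0f} left over")
```

Subtracting the $200 or so in utilities from the second result is what puts the renter in the red.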

If the minimum wage had kept pace with PRODUCTIVITY since the 1970s, it would be more than $20 an hour. This matters because the minimum wage is the floor for compensation, which affects all wages. If the minimum wage were $20, the lowest-paid workers would make, conservatively, $3200 a month, or about $38,000 a year. That’s instead of roughly $13,900 at the current $7.25.
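
The yearly figures can be sanity-checked the same way. This is only a sketch: it assumes a 160-hour work month, twelve months of steady work, and $7.25 as the current federal minimum wage, and `annual_pay` is a hypothetical helper name.

```python
HOURS_PER_MONTH = 160  # assumption: 40 hours a week for 4 weeks
MONTHS_PER_YEAR = 12


def annual_pay(hourly_wage):
    """Gross yearly pay before taxes, assuming steady full-time work."""
    return hourly_wage * HOURS_PER_MONTH * MONTHS_PER_YEAR


monthly_at_20 = 20 * HOURS_PER_MONTH   # the $3200 a month from the text
yearly_at_20 = annual_pay(20)          # productivity-matched minimum wage
yearly_at_current = annual_pay(7.25)   # assumed current federal minimum

print(f"At $20.00: ${monthly_at_20:,} a month, ${yearly_at_20:,.0f} a year")
print(f"At $7.25:  ${yearly_at_current:,.0f} a year")
```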

Adjusted for inflation, $1.60 in 1972 is worth $11.98 today, $4.73 an hour more than the current minimum wage of $7.25. The real insult is that American workers are nearly twice as productive as they were in 1972, yet they are paid less in inflation-adjusted terms than they would be even if productivity had not risen at all.

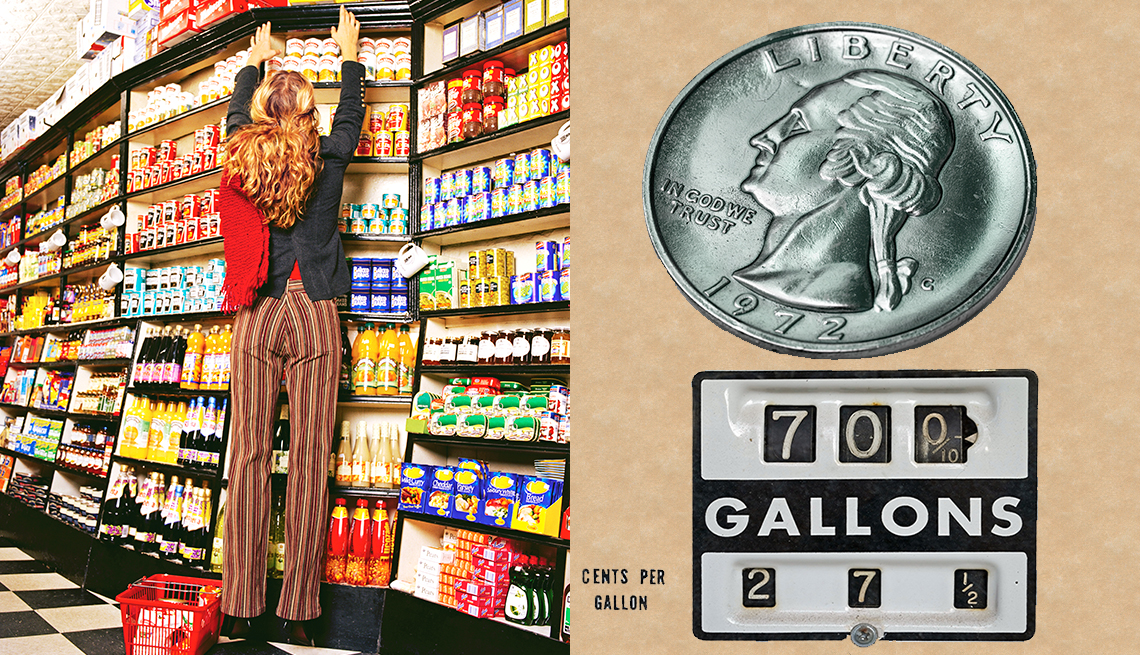

Prices have changed dramatically since 1972, with inflation impacting costs. Some items are surprisingly cheaper today, while others have soared in price.

www.aarp.org